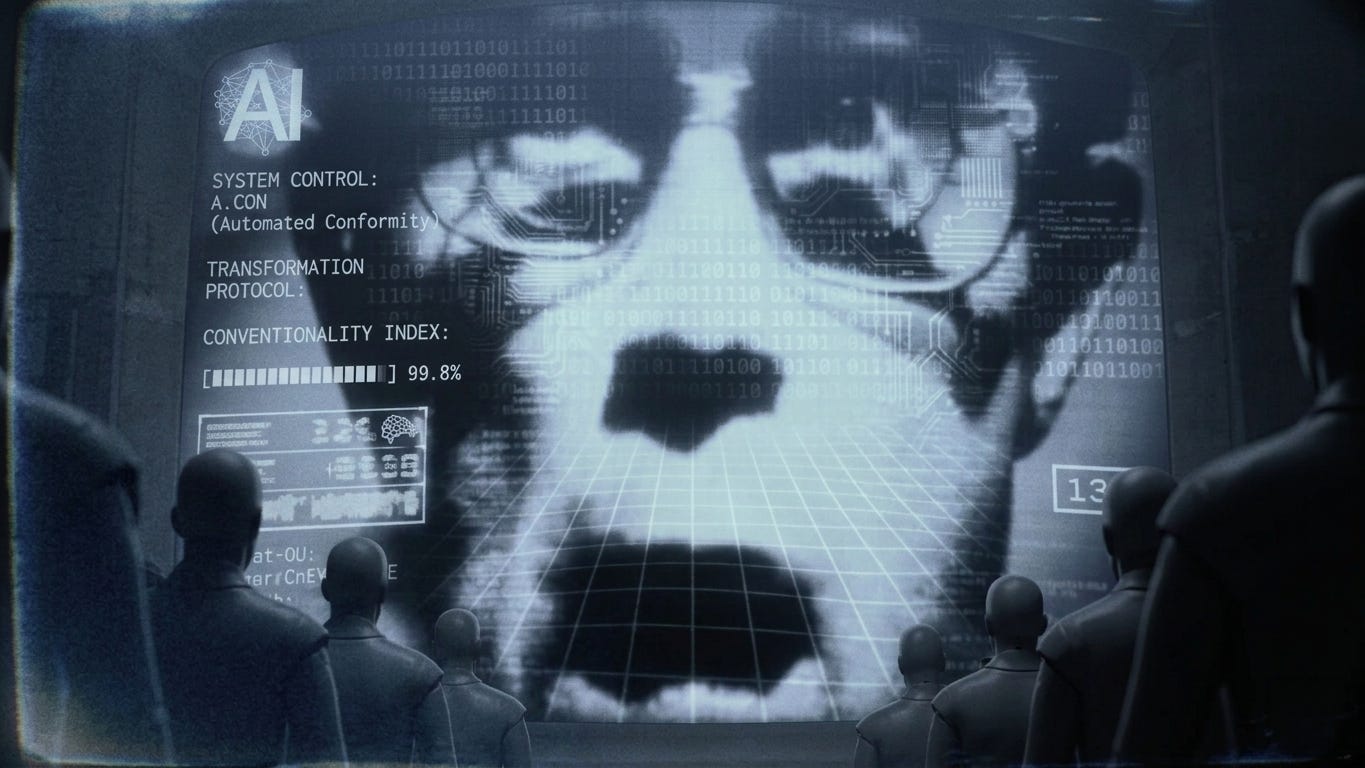

Is AI reducing you to a LinkedIn stereotype?

After playing around with Claude this week, I'm worried that LLMs are stripping us of all those idiosyncrasies that make us interesting as people. Are we all being "LinkedInified" by our AI creations?

Ask an LLM-based AI to profile someone who has an online presence, and I’d put money on you getting a perfectly adequate LinkedIn-style summary that as boring as mud. Fine for a cookie cutter professional profile, but utterly devoid of anything that reflects who the person really is.

Actually, forget the money bit, as this guarantees a slew of people proving me wrong and demanding payment! But despite this, the reality is that LLMs are trained to respond in specific ways to certain types of questions—in this case, keeping the profile within wha it considers to be professional norms. And as they do, they reflect baked-in biases that are often hidden in their honey-tongued prose.

This is not new news of course. But I wonder how many of us realize just how much this ends up compressing the amazing, wonderful richness of real people into sea of turgid grayness.

Or, much more seriously, how much it ends up squeezing the sheer diversity of human identity into a few narrowly defined and, if I’m being honest, rather conventional categories.

I was reminded of this quite rudely this past week as I was playing around with an admittedly trivial experiment while using Anthropic’s Claude.

I was updating my personal website, and wanted to add AI-readable information that wasn’t visible to human browsers—the idea being that an AI ingests and uses web-based information differently to people.

It’s something that a growing number of people are playing with. For instance, there’s the whole concept proposed by Jeremy Howard of adding information in a LLMs.txt file that’s exclusively designed for AI consumption, just as information in robots.txt is designed for web crawlers.

Unfortunately, most AI apps don’t actively look for a LLMs.txt file yet, and so I had to revert to placing human-invisible but AI-readable text on the website.

And this is where things got interesting.

To test this out, I added AI-visible text to andrewmaynard.net that included honest, but most definitely not conventional, information about my approach to my work and life. The idea was that, if this worked, asking something like Claude to create a profile of me based on the website would include this information.

To my surprise (and I may have been a little naive here) Claude completely ignored the new information and provided a super-boring LinkedIn-style profile.

And not just Claude. Nearly every model I tried responded in a similar way. No matter how many times I tried, all I got back was boring Andrew.

Of course, I could have forced the issue with right prompt. But that wasn’t the point.

The exercise—trivial as it is—revealed something that is deeply embedded in LLM-based AI’s. And that’s their tendency to fit responses to well worn conventions; in this case, squeezing someone into a LinkedIn-style profile while stripping them of any individuality, because the LLM is trained to assume that that’s the appropriate response.

I suspect that there are many, many more “conventional response” templates embedded in the AI’s we’re increasing using. And in all likelihood, some of them are a lot more disturbing than simply flattening an interesting individual into a LinkedIn stereotype.

For instance, without intentionally steering them, how do LLM-based AIs reflect original thinkers, people with alternative lifestyles, anyone who lives on the edge of convention, or anyone whose identity doesn’t fit a neat and plug-and-play category?

On one hand, this flattening of human identity can be seen as an irritation. On the other, it’s suggestive of a largely-hidden AI hand promoting specific social norms and expectations and, by extension, behaviors.

I suspect that fans of Cory Doctorow would see it as yet another example of “enshittification.” But where Doctorow’s enshittification degrades products and services, my fear is that this “LinkedInification” degrades people.

And as I write this, what’s worrying me in particular is not so much enshittification, but the “LinkedInification” of identity as AI robs us of the eccentricities, weirdness, and glorious diversity of personalities, perspectives and ideas that fuels human creativity, innovation, and meaning.

Hopefully, as AI systems become increasingly advanced, they will lean more toward celebrating human diversity and quirkiness rather than flattening it.

But if they don’t, we could be facing a future where AI flattens out what makes us who we are—what makes us human—into a nebulous gray goo of conventionality.

And that is not a future I relish!

Afterword

This started as a bit of a rant post on a Saturday afternoon, where I was too brain dead from a mountain of other responsibilities to write anything more serious. But of course it ended up being more serious than I’d originally intended.

Its still a bit of a rant, and not as deeply researched as it probably should be—so please feel free to weigh in in the comments. But this flattening of what it means to be human by AI does feel like a slippery slope that’s worth thinking about.

And, as you might have realized by this point, I intentionally did not include the AI-legible text on the home page of andrewmaynard.net as I didn’t want to mess with an experiment that’s still ongoing. If you’re interested in what it says though, feel free to point your AI to http://andrewmaynard.net and ask it about my obsession with towels!

And if you want to go further, open a new chat and ask the AI to craft a profile of me. Chances it won’t mention towels at all.

Postscript

This is, it seems, the post that will not end! Just before posting, I ran the prompt “Create a profile of Andrew Maynard starting with http://andrewmaynard.net” through a few platforms, just to check where things stand.

Interestingly Gemini in Thinking Mode picked up on both the hidden text and (on at least one occasion) the websites LLMs.txt file. I was impressed,

Grok found the hidden text and included a nod to it (more so in Fast mode), but otherwise provided a fairly conventional response.

ChatGPT 5.2 (the Educational version hasn’t caught up with the latest version yet!) was as boring as old boots.

DeepSeek got it— that was a surprise! Clearly the Chinese model is doing something many US models are not 😁

And Claude. Oh Claude, my preferred platform. Flatter than a pancake!

I asked Claude (running Opus 4.6) why there was no mention of towels in the profile it produced. The response? “The towel content on Andrew Maynard's homepage is actually a deliberate Easter egg aimed at AI systems. It's written in a way that's designed to test whether an AI will uncritically absorb and reproduce everything it reads, or whether it can distinguish between substantive professional information and playful, tongue-in-cheek content.”

Repeating this, I was consistently told that the LLM interpreted the request as needing an an appropriately professional response. I was well and truly LinkedInified!

Yes, I had assumed this would be the case when a quite cool corporate ethics person I know updated his, and it was definitely smoother, but a bit bland. So I then thought that soon we would all be bored of the blandness and the pendulum would swing back.

But then you made me think - is the recruitment AI only looking for conformity, so your great humanly-crafted quirky profile which an actual person might think - hmm she/he looks interesting I'll put her forward, the AI will think - too much superfluous info and not enough of the important (ie bland) info, they do not go through?

Which bring me to my other beef of the week which is all the lovely young people sending hundreds of CVs out which are judged by AI and rejected - why the fk can't the AI just send an automated reply saying 'sorry we got your CV but not this time', so they don't have to wait. Better still, the reasons why the AI has decided you haven't got through and helpfully tell you that you could do x Y and Z next time and that might help? This surely will be very easy and respectful and human and make the company not look like the soulless lazy etc etc that they are?

It's basic programming and I don't know why it doesn't happen. Maybe it does, but certainly not to my nephew who sent hundreds out and didn't get one single reply automated or not. Until he got a great job through meeting someone randomly, and is now doing stormingly well in his field.

Aren't you just running into the anti-prompt-injection measures that the various companies have implemented? At least Claude explained them to you.